You're viewing Apigee Edge documentation. Go to the Apigee X documentation.

You can use Edge Microgateway to provide Apigee API management for services running in a Kubernetes cluster. This topic explains why you might want to deploy Edge Microgateway on Kubernetes and describes how to deploy it as a service.

Use case

Services deployed to Kubernetes commonly expose APIs, either to external consumers or to other services running inside the cluster.

In either case, there is an important problem to solve: how will you manage these APIs? For example:

- How will you secure them?

- How will you manage traffic?

- How will you gain insight into traffic patterns, latencies, and errors?

- How will you publish your APIs so that developers can discover and use them?

Whether you are migrating existing services and APIs to the Kubernetes stack or creating new ones, Edge Microgateway helps provide a clean API management experience that includes security, traffic management, analytics, publishing, and more.

Run Edge Microgateway as a service

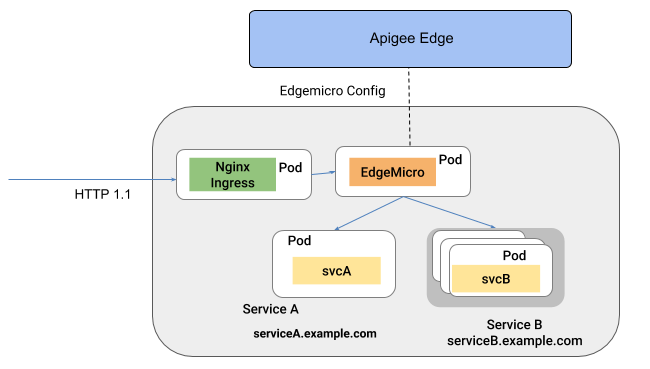

Cuando se implementa en Kubernetes como servicio, Edge Microgateway se ejecuta en su propio pod. En esta arquitectura, Edge Microgateway intercepta las llamadas entrantes a la API y las enruta a uno o más servicios de destino que se ejecutan en otros pods. En esta configuración, Edge Microgateway proporciona funciones de administración de API, como seguridad, estadísticas, administración de tráfico y aplicación de políticas a los otros servicios.

En la siguiente figura, se ilustra la arquitectura en la que Edge Microgateway se ejecuta como un servicio en un clúster de Kubernetes:
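To make the "own pod" arrangement more concrete, the following Python sketch builds a minimal Kubernetes Deployment and Service for a gateway and prints them as a JSON manifest. It is only an illustration under stated assumptions: the resource name, the container image reference, and the port `8000` are hypothetical placeholders, and the sketch omits the Edge Microgateway configuration and credentials that a real deployment would need.

```python
import json

# Hypothetical placeholders for illustration; none of these values
# come from this documentation.
GATEWAY_NAME = "edge-microgateway"
GATEWAY_IMAGE = "example.com/edgemicro:latest"  # placeholder image reference
GATEWAY_PORT = 8000  # assumed port on which the gateway listens


def gateway_deployment(name, image, port):
    """Return a Deployment that runs the gateway in its own pod."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": 1,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


def gateway_service(name, port):
    """Return a Service that sends cluster traffic to the gateway pod."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "selector": {"app": name},  # matches the pod labels above
            "ports": [{"port": port, "targetPort": port}],
        },
    }


def build_manifest():
    """Bundle both resources into a single List object."""
    return {
        "apiVersion": "v1",
        "kind": "List",
        "items": [
            gateway_deployment(GATEWAY_NAME, GATEWAY_IMAGE, GATEWAY_PORT),
            gateway_service(GATEWAY_NAME, GATEWAY_PORT),
        ],
    }


if __name__ == "__main__":
    print(json.dumps(build_manifest(), indent=2))
```

Because `kubectl` accepts JSON as well as YAML, the printed output could be saved to a file and passed to `kubectl apply -f`. The target services would run as separate Deployments in other pods, and callers would reach them through the gateway Service rather than directly.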

See Deploy Edge Microgateway as a service in Kubernetes.

Next step

- To learn how to run Edge Microgateway as a service on Kubernetes, see Deploy Edge Microgateway as a service in Kubernetes.