You're viewing Apigee Edge documentation. Go to the Apigee X documentation.

Symptom

The client application receives an HTTP status code of 503 with the message "Service Unavailable" as a response to an API request.

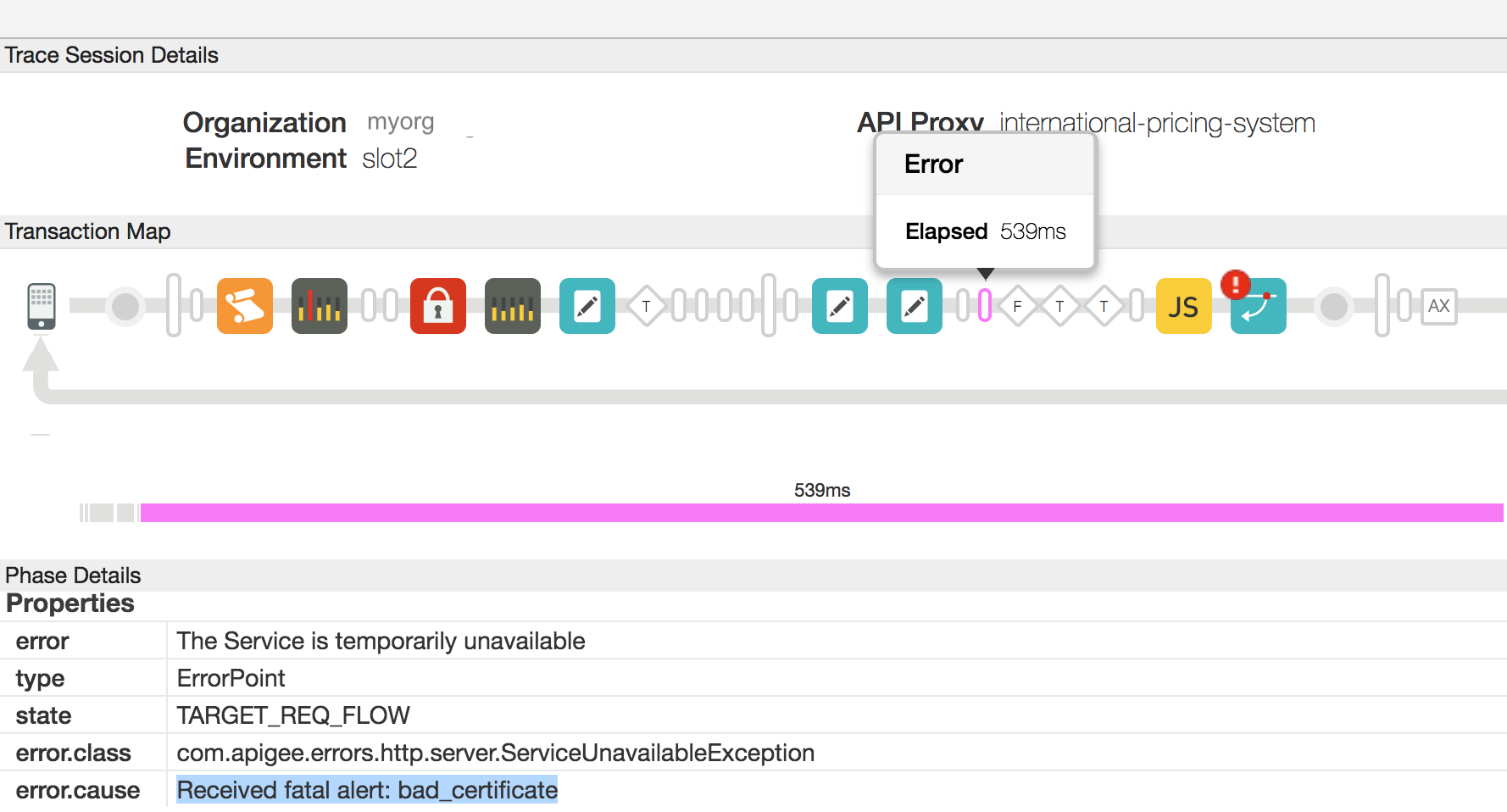

In the UI trace, you will observe that the error.cause is Received fatal alert: bad_certificate in the Target Request Flow for the failing API request.

If you have access to Message Processor logs, then you will notice the error message Received fatal alert: bad_certificate for the failing API request. This error is observed during the SSL handshake process between the Message Processor and the backend server in a two-way TLS setup.

Error Message

The Client application gets the following response code:

HTTP/1.1 503 Service Unavailable

In addition, you may observe the following error message:

    {
      "fault": {
        "faultstring": "The Service is temporarily unavailable",
        "detail": {
          "errorcode": "messaging.adaptors.http.flow.ServiceUnavailable"
        }
      }
    }

Private Cloud users will see the following error for the specific API request in the Message Processor logs /opt/apigee/var/log/edge-message-processor/system.log:

    2017-10-23 05:28:57,813 org:org-name env:env-name api:apiproxy-name rev:revision-number messageid:message_id NIOThread@0 ERROR HTTP.CLIENT - HTTPClient$Context.handshakeFailed() : SSLClientChannel[C:IP address:port # Remote host:IP address:port #]@65461 useCount=1 bytesRead=0 bytesWritten=0 age=529ms lastIO=529ms handshake failed, message: Received fatal alert: bad_certificate
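Searching the log by hand works, but the same lookup can be scripted. The following is a minimal sketch, assuming the log lines carry a `messageid:<value>` field as in the sample above. The function name and the second sample line are made up for illustration, and the first sample line is an abbreviated copy of the entry shown above.

```python
import re

# Matches the "messageid:<value>" field seen in the sample log line.
MESSAGE_ID_FIELD = re.compile(r"\bmessageid:(\S+)")
ALERT_TEXT = "Received fatal alert: bad_certificate"


def find_bad_certificate_entries(lines, message_id=None):
    """Return log lines that report the bad_certificate alert.

    If message_id is given, only lines tagged with that message ID are kept.
    """
    matches = []
    for line in lines:
        if ALERT_TEXT not in line:
            continue
        if message_id is not None:
            found = MESSAGE_ID_FIELD.search(line)
            if found is None or found.group(1) != message_id:
                continue
        matches.append(line.rstrip("\n"))
    return matches


# Illustrative input: the first entry abbreviates the sample above,
# the second is an invented unrelated line.
sample_log = [
    "2017-10-23 05:28:57,813 org:org-name env:env-name api:apiproxy-name "
    "rev:revision-number messageid:message_id NIOThread@0 ERROR HTTP.CLIENT - "
    "HTTPClient$Context.handshakeFailed() : handshake failed, "
    "message: Received fatal alert: bad_certificate\n",
    "2017-10-23 05:29:01,002 org:org-name env:env-name api:other-proxy "
    "rev:1 messageid:other_id NIOThread@1 INFO HTTP.CLIENT - request completed\n",
]

for entry in find_bad_certificate_entries(sample_log, "message_id"):
    print(entry)
```

To run it against a real log, pass an open file object for the system.log path given above instead of `sample_log`.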

Possible Causes

The possible causes for this issue are as follows:

| Cause | Description | Troubleshooting Instructions Applicable For |
| --- | --- | --- |
| No Client Certificate | The Keystore used in the Target Endpoint or Target Server does not have any Client Certificate. | Edge Private and Public Cloud Users |
| Certificate Authority Mismatch | The Certificate Authority of the leaf certificate (the first Certificate in the Certificate chain) in the Message Processor's Keystore does not match any of the Certificate Authorities accepted by the backend server. | Edge Private and Public Cloud Users |

Common Diagnosis Steps

- Enable trace in the Edge UI, make the API call, and reproduce the issue.

- In the UI trace results, navigate through each Phase and determine where the error occurred. The error would've occurred in the Target Request Flow.

- Examine the Flow that shows the error. You should observe the error as shown in the example trace below:

- As you see in the screenshot above, the error.cause is "Received fatal alert: bad_certificate".

- If you are a Private Cloud user, then follow the instructions below:

- You can get the message ID for the failing API request by determining the value of the Error Header "X-Apigee.Message-ID" in the Phase indicated by AX in the trace.

- Search for this message ID in the Message Processor log /opt/apigee/var/log/edge-message-processor/system.log and determine if you can find any further information about the error:

    2017-10-23 05:28:57,813 org:org-name env:env-name api:apiproxy-name rev:revision-number messageid:message_id NIOThread@0 ERROR HTTP.CLIENT - HTTPClient$Context.handshakeFailed() : SSLClientChannel[C:IP address:port # Remote host:IP address:port #]@65461 useCount=1 bytesRead=0 bytesWritten=0 age=529ms lastIO=529ms handshake failed, message: Received fatal alert: bad_certificate
    2017-10-23 05:28:57,813 org:org-name env:env-name api:apiproxy-name rev:revision-number messageid:message_id NIOThread@0 ERROR HTTP.CLIENT - HTTPClient$Context.handshakeFailed() : SSLInfo: KeyStore:java.security.KeyStore@52de60d9 KeyAlias:KeyAlias TrustStore:java.security.KeyStore@6ec45759
    2017-10-23 05:28:57,814 org:org-name env:env-name api:apiproxy-name rev:revision-number messageid:message_id NIOThread@0 ERROR ADAPTORS.HTTP.FLOW - RequestWriteListener.onException() : RequestWriteListener.onException(HTTPRequest@6071a73d)
    javax.net.ssl.SSLException: Received fatal alert: bad_certificate
        at sun.security.ssl.Alerts.getSSLException(Alerts.java:208) ~[na:1.8.0_101]
        at sun.security.ssl.SSLEngineImpl.fatal(SSLEngineImpl.java:1666) ~[na:1.8.0_101]
        at sun.security.ssl.SSLEngineImpl.fatal(SSLEngineImpl.java:1634) ~[na:1.8.0_101]
        at sun.security.ssl.SSLEngineImpl.recvAlert(SSLEngineImpl.java:1800) ~[na:1.8.0_101]
        at com.apigee.nio.NIOSelector$SelectedIterator.findNext(NIOSelector.java:496) [nio-1.0.0.jar:na]
        at com.apigee.nio.util.NonNullIterator.computeNext(NonNullIterator.java:21) [nio-1.0.0.jar:na]
        at com.apigee.nio.util.AbstractIterator.hasNext(AbstractIterator.java:47) [nio-1.0.0.jar:na]
        at com.apigee.nio.NIOSelector$2.findNext(NIOSelector.java:312) [nio-1.0.0.jar:na]
        at com.apigee.nio.NIOSelector$2.findNext(NIOSelector.java:302) [nio-1.0.0.jar:na]
        at com.apigee.nio.util.NonNullIterator.computeNext(NonNullIterator.java:21) [nio-1.0.0.jar:na]
        at com.apigee.nio.util.AbstractIterator.hasNext(AbstractIterator.java:47) [nio-1.0.0.jar:na]
        at com.apigee.nio.handlers.NIOThread.run(NIOThread.java:59) [nio-1.0.0.jar:na]

The Message Processor log has a stack trace for the error Received fatal alert: bad_certificate, but it does not have any further information that indicates the cause of this issue.


- To investigate this issue further, you will need to capture TCP/IP packets using the tcpdump tool.

- If you are a Private Cloud user, then you can capture the TCP/IP packets on the backend server or Message Processor. Preferably, capture them on the backend server as the packets are decrypted on the backend server.

- If you are a Public Cloud user, then capture the TCP/IP packets on the backend server.

- Once you've decided where you would like to capture TCP/IP packets, use the below tcpdump command to capture TCP/IP packets.

tcpdump -i any -s 0 host <IP address> -w <File name>

If you are taking the TCP/IP packets on the Message Processor, then use the public IP address of the backend server in the tcpdump command.

If there are multiple IP addresses for backend server/Message Processor, then you need to use a different tcpdump command. Refer to tcpdump for more information about this tool and for other variants of this command.
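For the multiple-address case, one possible variant joins several `host` terms with `or` in the capture filter. The sketch below only assembles the argument list and does not run tcpdump. The function name, the output file name, and the IP addresses (taken from the reserved documentation ranges) are placeholders, and the `-w` option is placed before the filter expression, which is a choice made here rather than a requirement stated in the original command.

```python
import shlex


def build_tcpdump_command(ip_addresses, output_file, interface="any"):
    """Build a tcpdump argument list that captures traffic for any listed host."""
    if not ip_addresses:
        raise ValueError("at least one IP address is required")
    # Capture filter syntax: "host A or host B" matches packets
    # to or from either address.
    filter_terms = []
    for index, address in enumerate(ip_addresses):
        if index > 0:
            filter_terms.append("or")
        filter_terms.extend(["host", address])
    # -s 0 captures full packets; -w writes the raw packets to a file.
    return ["tcpdump", "-i", interface, "-s", "0", "-w", output_file] + filter_terms


command = build_tcpdump_command(["203.0.113.10", "203.0.113.11"], "capture.pcap")
print(shlex.join(command))
# prints: tcpdump -i any -s 0 -w capture.pcap host 203.0.113.10 or host 203.0.113.11
```

The returned list can be handed to `subprocess.run` on a host where tcpdump is installed and the user has capture privileges.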

- Analyze the TCP/IP packets using the Wireshark tool or similar tool with which you are familiar.

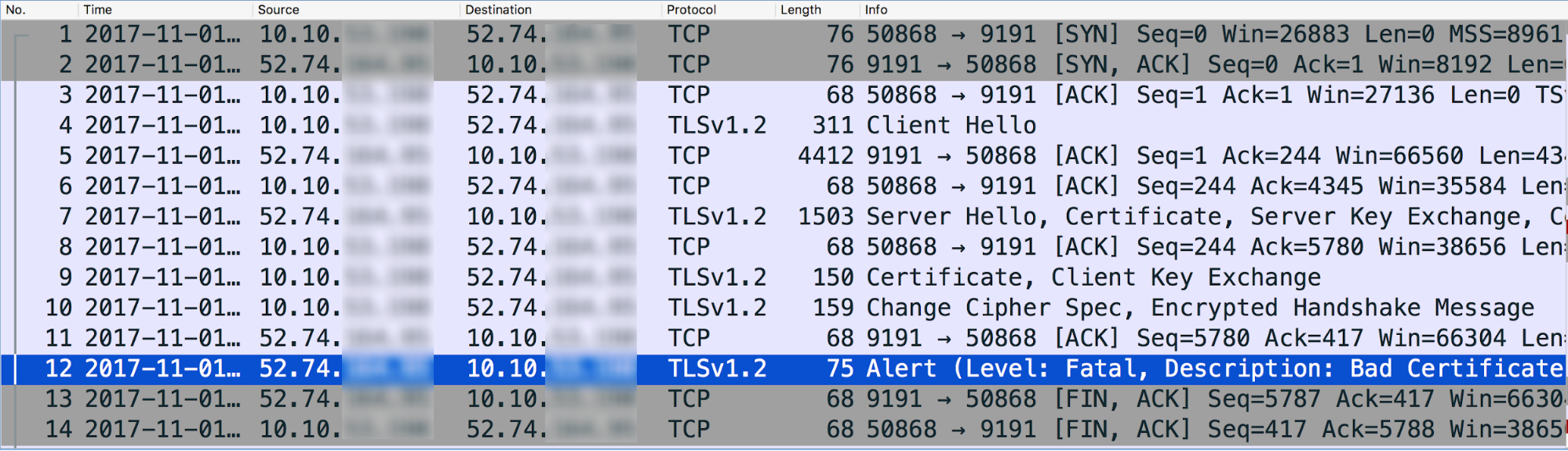

Here's the analysis of the sample TCP/IP packet data using the Wireshark tool:

- Message #4 in the tcpdump above shows that the Message Processor (source) sent a "Client Hello" message to the backend server (destination).

- Message #5 shows that the backend server acknowledges the Client Hello message from the Message Processor.

- The backend server sends the "Server Hello" message along with its Certificate, and then requests the Client to send its Certificate in Message #7.

- The Message Processor completes the verification of the Certificate and acknowledges the backend server's ServerHello message in Message #8.

- The Message Processor sends its Certificate to the backend server in Message #9.

- The backend server acknowledges the receipt of the Message Processor's Certificate in Message #11.

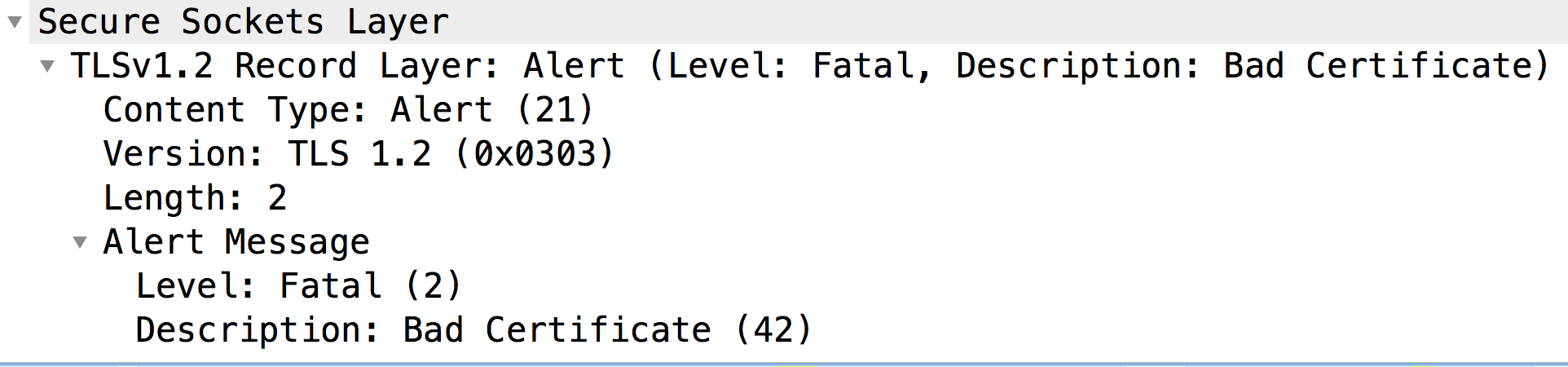

However, it immediately sends a Fatal Alert: Bad Certificate to the Message Processor (Message #12). This indicates that the Certificate sent by the Message Processor was bad and hence the Certificate Verification failed on the backend server. As a result, the SSL Handshake failed and the connection will be closed.
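To make the alert in Message #12 concrete, here is a minimal sketch of how an unencrypted TLS alert record is laid out, assuming the TLS 1.2 record format from RFC 5246 (content type 21, a two-byte payload holding level and description, with description 42 meaning bad_certificate). The function name and the example bytes are hand-built for illustration and are not taken from the capture shown here.

```python
# Alert constants from the TLS 1.2 specification (RFC 5246, section 7.2).
CONTENT_TYPE_ALERT = 21
ALERT_LEVELS = {1: "warning", 2: "fatal"}
ALERT_DESCRIPTIONS = {42: "bad_certificate"}  # only the alert discussed here


def parse_plaintext_alert(record):
    """Decode one unencrypted TLS alert record into (level, description)."""
    if len(record) < 7:
        raise ValueError("record too short for a TLS alert")
    content_type = record[0]
    # Bytes 1-2 hold the protocol version, bytes 3-4 the payload length.
    payload_length = int.from_bytes(record[3:5], "big")
    if content_type != CONTENT_TYPE_ALERT or payload_length != 2:
        raise ValueError("not a plaintext TLS alert record")
    level, description = record[5], record[6]
    return (
        ALERT_LEVELS.get(level, f"unknown({level})"),
        ALERT_DESCRIPTIONS.get(description, f"unknown({description})"),
    )


# Hand-built record: alert, version 0x0303, length 2, fatal, bad_certificate.
example_record = bytes([0x15, 0x03, 0x03, 0x00, 0x02, 0x02, 0x2A])
print(parse_plaintext_alert(example_record))
# prints: ('fatal', 'bad_certificate')
```

Wireshark performs this decoding automatically; the sketch only shows which two bytes carry the "Fatal" level and the "Bad Certificate" description.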

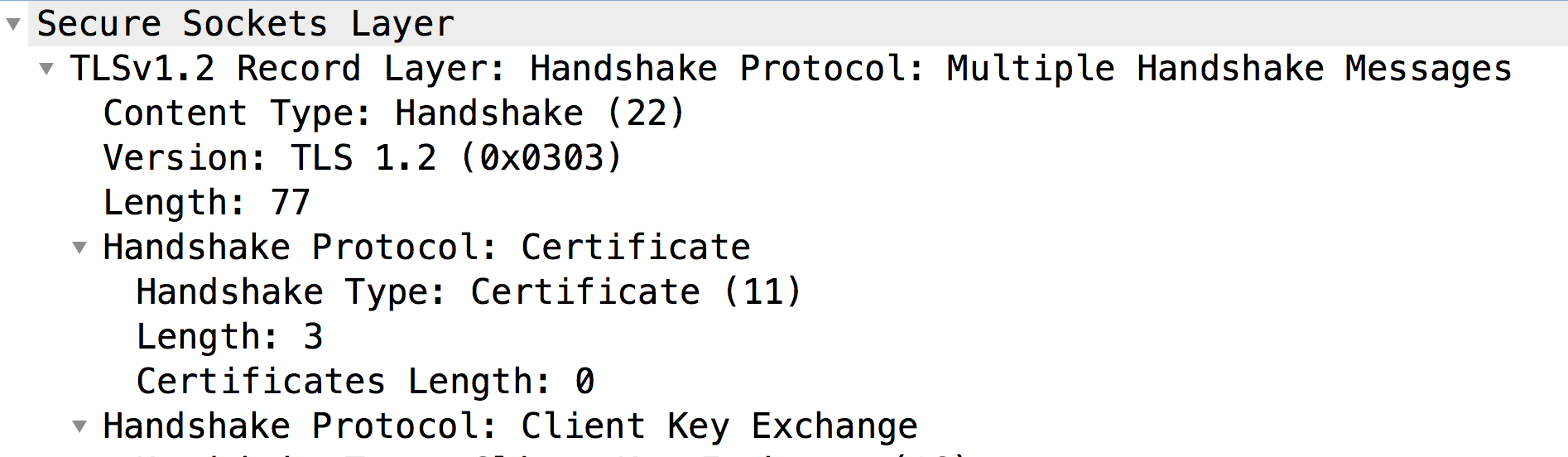

Let's now look at Message #9 to check the contents of the Certificate sent by the Message Processor:

- As you can notice, the backend server did not get any Certificate from the Client (Certificate Length: 0). Hence, the backend server sends the Fatal Alert: Bad Certificate.

- Typically, this happens when the Client, that is, the Message Processor (a Java-based process):

- Does not have any Client Certificate in its KeyStore, or

- Is unable to send a Client Certificate. This can happen if it can't find a Certificate that is issued by one of the backend server's acceptable Certificate Authorities. That is, if the Certificate Authority of the Client's Leaf Certificate (i.e., the first Certificate in the chain) does not match any of the backend server's acceptable Certificate Authorities, then the Message Processor will not send the Certificate.
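The "Certificate Length: 0" observation can also be read directly from the bytes of the Certificate handshake message. The following is a minimal sketch, assuming the TLS 1.2 layout from RFC 5246: one byte of message type (11 for Certificate), three bytes of body length, then three bytes giving the length of the certificate list. The function names and both example messages are hand-built for illustration.

```python
HANDSHAKE_TYPE_CERTIFICATE = 11  # msg_type value for Certificate (RFC 5246)


def certificate_list_length(handshake_message):
    """Return the byte length of the certificate list in a Certificate message."""
    if len(handshake_message) < 7:
        raise ValueError("message too short for a Certificate handshake message")
    if handshake_message[0] != HANDSHAKE_TYPE_CERTIFICATE:
        raise ValueError("not a Certificate handshake message")
    # Bytes 1-3: handshake body length. Bytes 4-6: certificate list length.
    return int.from_bytes(handshake_message[4:7], "big")


def client_sent_no_certificate(handshake_message):
    """True when the Certificate message carries an empty certificate list."""
    return certificate_list_length(handshake_message) == 0


# Empty list: body length 3 (just the list-length field), list length 0.
empty_certificate_message = bytes([0x0B, 0x00, 0x00, 0x03, 0x00, 0x00, 0x00])

# Non-empty list: one dummy 2-byte "certificate" preceded by its 3-byte length.
dummy_certificate_message = bytes(
    [0x0B, 0x00, 0x00, 0x08, 0x00, 0x00, 0x05, 0x00, 0x00, 0x02, 0xAA, 0xBB]
)

print(client_sent_no_certificate(empty_certificate_message))
print(client_sent_no_certificate(dummy_certificate_message))
```

An empty list is what a Java client sends when it has no Certificate to offer or cannot find one issued by an acceptable Certificate Authority, which is why both causes look identical in the capture.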

Let's look at each of these causes separately as follows.

Cause: No Client Certificate

Diagnosis

If there is no Certificate in the Keystore specified in the SSL Info section of the Target Endpoint or the target server used in the Target Endpoint, then that's the cause for this error.

Follow the below steps to determine if this is the cause:

- Determine the Keystore being used in the Target Endpoint or the Target Server for the specific API Proxy by using the below steps:

- Get the Keystore reference name from the Keystore element in SSLInfo section in the Target Endpoint or the Target Server.

Let's look at a sample SSLInfo section in a Target Endpoint Configuration:

    <SSLInfo>
      <Enabled>true</Enabled>
      <ClientAuthEnabled>true</ClientAuthEnabled>
      <KeyStore>ref://myKeystoreRef</KeyStore>
      <KeyAlias>myKey</KeyAlias>
      <TrustStore>ref://myTrustStoreRef</TrustStore>
    </SSLInfo>

- In the above example, the Keystore reference name is "myKeystoreRef".

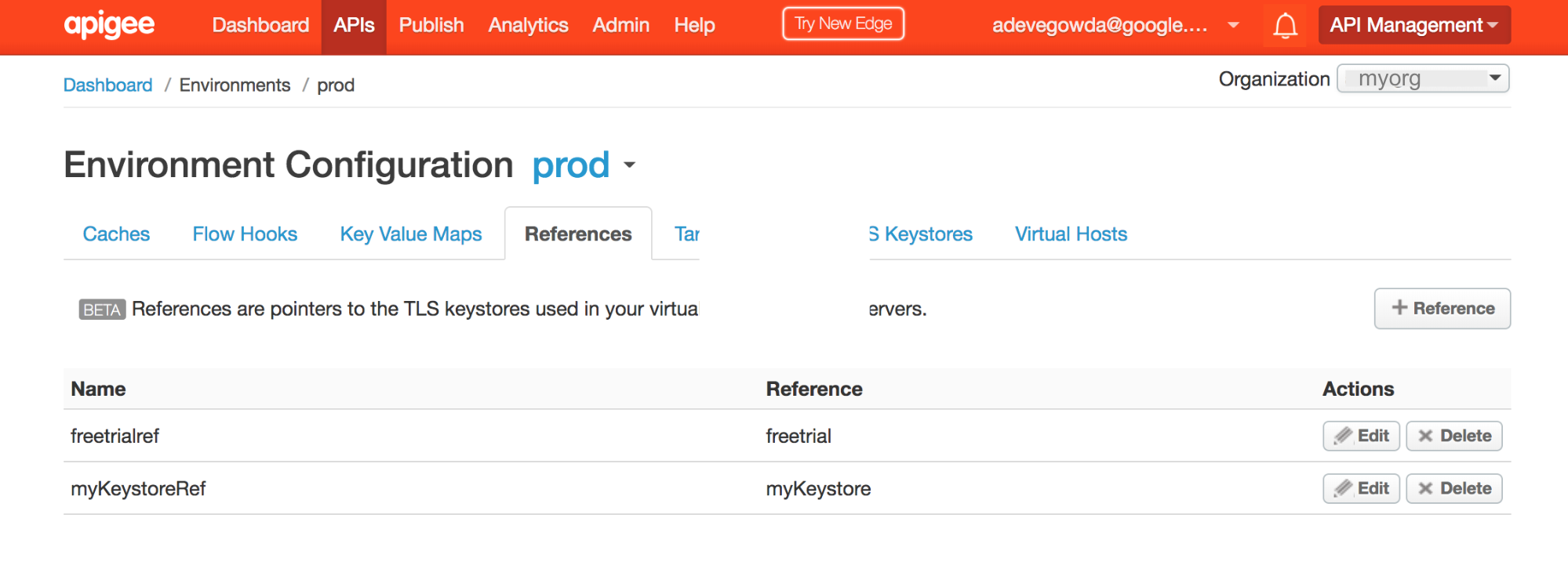

- Go to Edge UI and select API Proxies -> Environment Configurations.

Select the References tab and search for the Keystore reference name. Note down the name in the Reference column for the specific Keystore reference. This will be your Keystore name.

- In the above example, you can notice that myKeystoreRef has the reference to "myKeystore". Therefore, the Keystore name is myKeystore.


- Check if this Keystore contains the Certificate either using the Edge UI or the List certs for keystore API.

- If the Keystore does contain Certificate(s), then move to Cause: Certificate Authority Mismatch.

- If the Keystore doesn't contain any Certificate, then that's the reason why the Client Certificate is not sent by the Message Processor.
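The diagnosis steps above (read the Keystore reference from SSLInfo, resolve it to a Keystore name, then check whether that Keystore holds any Certificate) can be sketched as follows. This is an illustration only: the `references` and `certs_by_keystore` dictionaries are hypothetical stand-ins for what you would read from the References tab and from the List certs for keystore API, and the function names are made up. Treating a value without the `ref://` prefix as a direct Keystore name is an assumption of this sketch.

```python
import xml.etree.ElementTree as ET

# Sample SSLInfo section from the Target Endpoint configuration above.
SSLINFO_XML = """
<SSLInfo>
  <Enabled>true</Enabled>
  <ClientAuthEnabled>true</ClientAuthEnabled>
  <KeyStore>ref://myKeystoreRef</KeyStore>
  <KeyAlias>myKey</KeyAlias>
  <TrustStore>ref://myTrustStoreRef</TrustStore>
</SSLInfo>
"""


def resolve_keystore_name(sslinfo_xml, references):
    """Return the Keystore name that the SSLInfo <KeyStore> element points to."""
    root = ET.fromstring(sslinfo_xml.strip())
    value = root.findtext("KeyStore")
    if value is None:
        raise ValueError("SSLInfo has no KeyStore element")
    value = value.strip()
    prefix = "ref://"
    if value.startswith(prefix):
        # Look the reference name up in the reference-to-keystore mapping.
        return references[value[len(prefix):]]
    # Assumption: a value without ref:// names the Keystore directly.
    return value


def has_client_certificate(keystore_name, certs_by_keystore):
    """True when the named Keystore lists at least one Certificate."""
    return len(certs_by_keystore.get(keystore_name, [])) > 0


# Hypothetical data standing in for the Edge UI and the management API.
references = {"myKeystoreRef": "myKeystore"}
certs_by_keystore = {"myKeystore": []}

keystore = resolve_keystore_name(SSLINFO_XML, references)
print(keystore, has_client_certificate(keystore, certs_by_keystore))
```

With an empty certificate list, as in the hypothetical data here, the check reports that the Keystore has no Client Certificate, which corresponds to the cause described in this section.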

Resolution

- Ensure the proper and complete Client Certificate chain is uploaded to the specific Keystore in the Message Processor.

Cause: Certificate Authority Mismatch

Generally when the Server requests the Client to send its Certificate, it indicates the set of accepted Issuers or Certificate Authorities. If the Issuer/Certificate Authority of the leaf certificate (i.e., the first Certificate in the Certificate chain) in the Message Processor's Keystore does not match any of the Certificate Authorities accepted by the backend server, then Message Processor (which is a Java based process) will not send the Certificate to the backend server.

Use the below steps to confirm if this is the case:

- Get the list of Certificates stored in the Keystore using the List certs for keystore API.

- Get the Details of each Certificate obtained in Step #1 above using the Get cert for keystore API.

- Note down the issuer of the leaf Certificate (i.e., the first Certificate in the certificate chain) stored in the Keystore.

Sample Leaf Certificate

    {
      "certInfo" : [ {
        "basicConstraints" : "CA:FALSE",
        "expiryDate" : 1578889324000,
        "isValid" : "Yes",
        "issuer" : "CN=MyCompany Test SHA2 CA G2, DC=testcore, DC=test, DC=dir, DC=mycompany, DC=com",
        "publicKey" : "RSA Public Key, 2048 bits",
        "serialNumber" : "65:00:00:00:d2:3e:12:d8:56:fa:e2:a9:69:00:06:00:00:00:d2",
        "sigAlgName" : "SHA256withRSA",
        "subject" : "CN=nonprod-api.mycompany.com, OU=ITS, O=MyCompany, L=MELBOURNE, ST=VIC, C=AU",
        "subjectAlternativeNames" : [ ],
        "validFrom" : 1484281324000,
        "version" : 3
      } ],
      "certName" : "nonprod-api.mycompany.com.key.pem-cert"
    }

In the above example, the issuer/Certificate Authority is "CN=MyCompany Test SHA2 CA G2, DC=testcore, DC=test, DC=dir, DC=mycompany, DC=com"

- Determine the backend server's accepted list of Issuers or Certificate Authorities using one of the following techniques:

Technique #1: Use the openssl command below:

openssl s_client -host <backend server host name> -port <Backend port#> -cert <Client Certificate> -key <Client Private Key>

Refer to the section titled "Acceptable Client Certificate CA names" in the output of this command as shown below:

    Acceptable client certificate CA names
    /C=AU/ST=VIC/L=MELBOURNE/O=MyCompany/OU=ITS/CN=nonprod-api.mycompany.com
    /C=AU/ST=VIC/L=MELBOURNE/O=MyCompany/OU=ITS/CN=nonprod-api.mycompany.com

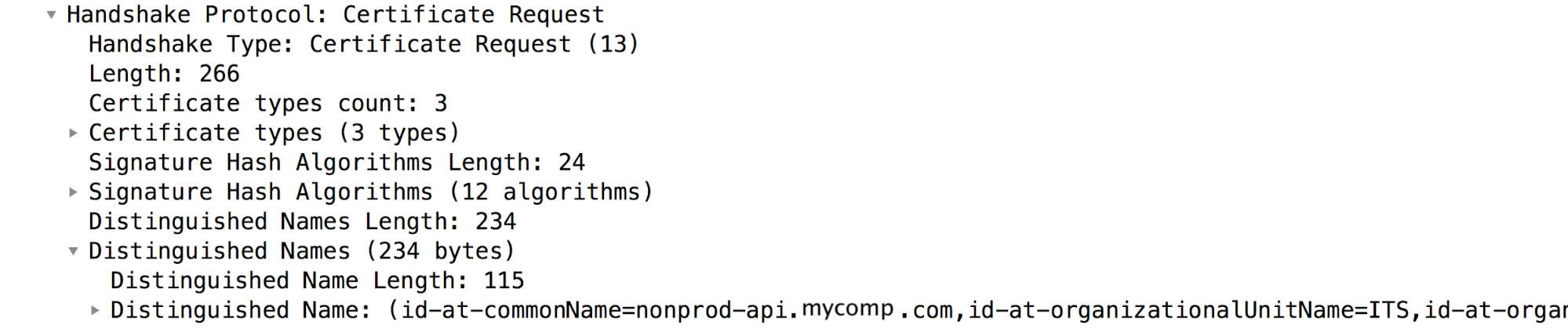

Technique #2: Check the Certificate Request packet in TCP/IP Packets, where the backend server requests the Client to send its certificate:

In the sample TCP/IP packets shown above, Certificate Request packet is message #7. Refer to the section "Distinguished Names", which contains the backend server's Acceptable Certificate Authorities.

Verify if the Certificate Authority obtained in step #3 matches any entry in the list of the backend server's accepted Issuers or Certificate Authorities obtained in step #4. If there's a mismatch, then the Message Processor will not send the Client Certificate to the backend server.

In the above example, you can see that the issuer of the Client's Leaf Certificate in the Message Processor's Keystore does not match any of the backend server's Accepted Certificate Authorities. Hence, the Message Processor does not send the Client Certificate to the backend server. This causes the SSL handshake to fail, and the backend server sends the "Fatal alert: bad_certificate" message.
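The comparison between the leaf Certificate's issuer and the backend server's acceptable CA names can be sketched in code. This is a simplified illustration, not how Java or the backend server performs the match. It assumes the two text forms seen in this Playbook (comma-separated as in the Get cert output and slash-separated as in the openssl output), ignores attribute order, and does not handle escaped separators inside values. The function names and the extra slash-form DN in the last call are made up for illustration.

```python
def parse_distinguished_name(dn):
    """Split a DN in 'CN=a, DC=b' or '/C=AU/O=x' form into sorted pairs."""
    dn = dn.strip()
    separator = "/" if dn.startswith("/") else ","
    pairs = []
    for part in dn.split(separator):
        part = part.strip()
        if not part:
            continue
        attribute, _, value = part.partition("=")
        pairs.append((attribute.strip().upper(), value.strip()))
    # Sorting makes the comparison independent of attribute order.
    return tuple(sorted(pairs))


def issuer_is_accepted(leaf_issuer, acceptable_ca_names):
    """True when the issuer equals one of the acceptable CA names."""
    issuer = parse_distinguished_name(leaf_issuer)
    return any(
        parse_distinguished_name(name) == issuer for name in acceptable_ca_names
    )


# Values copied from the sample leaf Certificate and the openssl output above.
leaf_issuer = (
    "CN=MyCompany Test SHA2 CA G2, DC=testcore, DC=test, DC=dir, "
    "DC=mycompany, DC=com"
)
acceptable_ca_names = [
    "/C=AU/ST=VIC/L=MELBOURNE/O=MyCompany/OU=ITS/CN=nonprod-api.mycompany.com",
]

print(issuer_is_accepted(leaf_issuer, acceptable_ca_names))
# prints: False

# Hypothetical acceptable list that does include the issuer, in slash form.
print(issuer_is_accepted(
    leaf_issuer,
    ["/DC=com/DC=mycompany/DC=dir/DC=test/DC=testcore/CN=MyCompany Test SHA2 CA G2"],
))
# prints: True
```

The first call reproduces the mismatch described in this example; the second shows the outcome once the issuer appears among the acceptable CA names.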

Resolution

- Ensure the certificate with the issuer/Certificate Authority that matches the issuer/Certificate Authority of the Client's Leaf Certificate (first certificate in the chain) is stored in the backend server's Truststore.

- In the example described in this Playbook, the Certificate with the issuer "issuer" : "CN=MyCompany Test SHA2 CA G2, DC=testcore, DC=test, DC=dir, DC=mycompany, DC=com" was added to backend server's Truststore to resolve the issue.

If the problem still persists, go to Must Gather Diagnostic Information.

Must Gather Diagnostic Information

If the problem persists even after following the above instructions, please gather the following diagnostic information. Then contact Apigee Edge Support and share it with them:

- If you are a Public Cloud user, then provide the following information:

- Organization Name

- Environment Name

- API Proxy Name

- Complete curl command to reproduce the error

- Trace File showing the error

- TCP/IP packets captured on the backend server

- If you are a Private Cloud user, provide the following information:

- Complete Error Message observed

- API Proxy bundle

- Trace File showing the error

- Message Processor logs /opt/apigee/var/log/edge-message-processor/logs/system.log

- TCP/IP packets captured on the backend server or Message Processor.

- Output of Get cert for keystore API.

- Details about which sections in this Playbook you have tried and any other insights that will help us fast-track resolution of this issue.