You are viewing Apigee Edge documentation.


Backend systems run the services that API proxies access. In other words, they are the fundamental reason that APIs and the API management proxy layer exist at all.

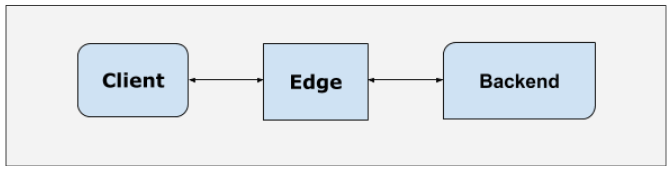

Every API request routed through the Edge platform follows a typical path before it reaches the backend:

- The request originates from a client, which can be anything from a browser to an app.

- The Edge gateway then receives the request.

- The request is processed within the gateway. As part of this processing, the request passes through a number of distributed components.

- The gateway then routes the request to the backend, which responds to it.

- The response from the backend then travels the exact reverse path through the Edge gateway back to the client.

The performance of API requests routed through Edge therefore depends on both Edge and the backend systems. This antipattern focuses on the impact that poorly performing backend systems have on API requests.
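To make this dependency concrete, here is a minimal Python sketch that adds up the time spent along the path described above. The hop names and millisecond figures are hypothetical, and the sketch assumes, as a simplification, that each gateway-side hop costs the same on the way in and on the way out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    """One stage on the request path, with an assumed processing time in ms."""
    name: str
    latency_ms: float


def latency_breakdown(request_path: list[Hop], backend: Hop) -> dict[str, float]:
    """Total round-trip latency and the share of it spent in the backend.

    Simplifying assumption: the response retraces request_path in reverse
    and each hop costs the same in both directions, so gateway-side hops
    are counted twice.
    """
    gateway_ms = 2 * sum(hop.latency_ms for hop in request_path)
    total_ms = gateway_ms + backend.latency_ms
    return {
        "total_ms": total_ms,
        "gateway_ms": gateway_ms,
        "backend_share": backend.latency_ms / total_ms,
    }


if __name__ == "__main__":
    # Hypothetical figures: fast network and gateway hops, a slow backend.
    path = [Hop("client to gateway", 20), Hop("gateway processing", 10)]
    print(latency_breakdown(path, Hop("backend", 900)))
```

With these figures the backend accounts for 900 of 960 ms (about 94%), so tuning the gateway alone cannot bring the round trip much below the backend's own response time.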

Antipattern

Consider the case of a problematic backend. There are two possibilities:

Inadequately sized backend

The challenge of exposing the services on these backend systems through APIs is that they become accessible to a large number of end users. From a business perspective this is a desirable challenge, but one that still has to be addressed.

Backend systems are often not prepared for this extra demand on their services. As a result, they are undersized or not tuned to respond efficiently.

The problem with an inadequately sized backend is that a spike in API requests stresses resources such as CPU, load, and memory on the backend systems. Eventually, this causes API requests to fail.
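A toy simulation, under assumed numbers, of how an undersized backend turns a traffic spike into failures: the hypothetical backend can finish `capacity_per_second` requests per second and hold at most `queue_limit` waiting requests, and anything beyond that is counted as failed. Real backends fail in messier ways (timeouts, connection resets, 5xx errors), so treat this only as an illustration.

```python
def simulate_backend(
    arrivals_per_second: list[int], capacity_per_second: int, queue_limit: int
) -> tuple[int, int, int]:
    """Return (served, failed, still_queued) for a backend that can finish
    capacity_per_second requests per second and buffer up to queue_limit
    waiting requests. Requests that do not fit in the buffer are dropped."""
    served = failed = queued = 0
    for arrivals in arrivals_per_second:
        queued += arrivals
        # Process as much of the backlog as this second's capacity allows.
        done = min(queued, capacity_per_second)
        served += done
        queued -= done
        # Overflow beyond the buffer is rejected (think 503 or refused connection).
        if queued > queue_limit:
            failed += queued - queue_limit
            queued = queue_limit
    return served, failed, queued


if __name__ == "__main__":
    steady = [80] * 5
    spike = [80, 80, 300, 80, 80]
    print(simulate_backend(steady, capacity_per_second=100, queue_limit=50))
    print(simulate_backend(spike, capacity_per_second=100, queue_limit=50))
```

With steady traffic of 80 requests per second every request is served, while a single one-second spike of 300 requests exceeds both capacity and buffer and produces 150 failures.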

Slow backend

The problem with an improperly tuned backend is that it is very slow to respond to every request it receives, which leads to increased latency, premature timeouts, and a degraded customer experience.
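The following sketch, built on assumed numbers rather than measurements, shows how a slow backend raises latency and then causes timeouts. Requests arrive at a fixed interval, and a hypothetical backend has a fixed number of workers. Once each request takes longer than the workers can keep up with, requests queue and latency keeps growing until it crosses the caller's timeout.

```python
import heapq


def request_latencies(
    n_requests: int, interval_ms: float, service_ms: float, workers: int
) -> list[float]:
    """Latency (waiting + service time) of each request when one request
    arrives every interval_ms and the backend has `workers` parallel
    workers that each need service_ms per request."""
    free_at = [0.0] * workers  # min-heap: time each worker becomes free
    latencies = []
    for i in range(n_requests):
        arrival = i * interval_ms
        # Hand the request to whichever worker frees up first.
        start = max(arrival, heapq.heappop(free_at))
        finish = start + service_ms
        heapq.heappush(free_at, finish)
        latencies.append(finish - arrival)
    return latencies


def count_timeouts(latencies: list[float], timeout_ms: float) -> int:
    """Number of requests that would exceed the caller's timeout."""
    return sum(1 for latency in latencies if latency > timeout_ms)


if __name__ == "__main__":
    tuned = request_latencies(20, interval_ms=10, service_ms=30, workers=4)
    slow = request_latencies(20, interval_ms=10, service_ms=60, workers=4)
    print(max(tuned), count_timeouts(tuned, timeout_ms=100))
    print(max(slow), count_timeouts(slow, timeout_ms=100))
```

With 4 workers and one request every 10 ms, a 30 ms service time keeps every latency at 30 ms. A 60 ms service time makes latency climb by 20 ms every four requests, so 8 of 20 requests exceed a 100 ms timeout.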

The Edge platform offers a few tunable options to work around and manage a slow backend. However, these options have limitations.
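One common kind of tunable option is a timeout on the call to the backend. The generic Python sketch below is not Edge configuration, and `slow_backend` and `call_with_timeout` are hypothetical names. It illustrates both the benefit and the limitation: the client gets a fast 504 instead of waiting on the backend, but the backend keeps processing the abandoned request, so the slowness itself is untouched.

```python
import concurrent.futures
import time


def slow_backend(delay_s: float) -> str:
    """Hypothetical backend call; sleeping simulates a slow response."""
    time.sleep(delay_s)
    return "200 OK"


def call_with_timeout(executor, backend_call, timeout_s: float) -> str:
    """Forward a request, but stop waiting for the backend after timeout_s.

    The timeout only bounds how long the caller waits. The submitted call
    keeps running in its worker thread, so the backend still does the work.
    """
    future = executor.submit(backend_call)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        return "504 Gateway Timeout"


if __name__ == "__main__":
    with concurrent.futures.ThreadPoolExecutor(max_workers=2) as pool:
        print(call_with_timeout(pool, lambda: slow_backend(0.01), timeout_s=1.0))
        print(call_with_timeout(pool, lambda: slow_backend(0.5), timeout_s=0.1))
    # Leaving the "with" block waits for the abandoned 0.5 s call to finish.
```

A timeout is therefore a mitigation for the caller, not a fix for the backend.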

Impact

- With an inadequately sized backend, an increase in traffic can lead to failed requests.

- With a slow backend, request latency increases.

Best practice

- Use caching to store responses. This improves API response times and reduces the load on the backend server.

- Resolve the underlying problems in the slow backend servers.
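The caching practice can be sketched as a small time-to-live (TTL) cache placed in front of a backend call. This is a generic Python illustration with assumed names (`TtlResponseCache`, `fetch`), not how Edge implements caching; on Edge you would normally use a built-in caching policy such as Response Cache rather than custom code. The clock is injectable so that expiry can be checked without waiting.

```python
import time
from typing import Callable


class TtlResponseCache:
    """Minimal in-memory response cache with a fixed time-to-live per entry."""

    def __init__(self, ttl_s: float, clock: Callable[[], float] = time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock
        self._entries: dict[str, tuple[float, str]] = {}  # key -> (expires_at, response)
        self.backend_calls = 0  # how many requests actually reached the backend

    def get(self, key: str, fetch: Callable[[str], str]) -> str:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: the backend is not contacted
        # Miss or expired entry: call the backend and store the fresh response.
        self.backend_calls += 1
        response = fetch(key)
        self._entries[key] = (now + self.ttl_s, response)
        return response


if __name__ == "__main__":
    cache = TtlResponseCache(ttl_s=60)
    for _ in range(100):
        cache.get("/products", lambda path: f"response for {path}")
    print(cache.backend_calls)
```

Within the TTL, repeated requests for the same key are answered from memory and only the first reaches the backend. The TTL is the main design choice: a longer TTL offloads more work from the backend but serves staler data, and only responses that are safe to reuse (for example, responses to idempotent GET requests) should be cached.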